Posts Tagged ‘climate change’

NCSU scientist chases tornadoes to better understand them

Sunday, October 2, 2011, 10:24 pm

Matt Parker, an N.C. State University associate professor, sounded almost nostalgic when he talked about the more than 700 tornadoes that were reported roaring across the South, Southeast and Midwest in April, about four times as many tornadoes as hit the U.S. during an average April.

Parker is an atmospheric scientist and has studied how tornadoes develop to help improve weather forecasts.

“This was a historic year,” Parker told science writers and educators during a Sept. 27 talk at Sigma Xi in Research Triangle Park.

A spring storm season like this year’s doesn’t come around often. That’s a good thing, considering the loss of life and the devastating destruction the tornadoes wrought.

April 2011 ranks as the most active tornado month on record, according to the National Oceanic and Atmospheric Administration. A storm system that moved across Oklahoma, Arkansas, Mississippi, Alabama, Georgia, North Carolina and Virginia in mid-April killed 43 people, 22 of them in North Carolina. One of the tornadoes it spawned April 16 cut a 180-foot-long track through suburban Wake County, Parker said.

A second storm system at the end of the month was even deadlier. It caused a super outbreak of tornadoes in the South that killed more than 300 people in four days, according to NOAA.

A month later, on May 22, a powerful tornado hit Joplin, Mo., killing 157 people. According to NOAA, the Joplin tornado packed winds of more than 200 miles per hour, was nearly a mile wide and left a track 6 miles long.

What about climate change? Could that be a cause for the historic outbreak of tornadoes this year?

“We really don’t know,” Parker said.

A tornado is a mere blip in a 100-year data set that tracks changes in the climate, he said. The increase in the number of reported tornadoes, he added, is likely due to better forecasting and warning systems, a higher population density and the increase in the number of storm chasers.

What was devastating and deadly to the people who lived in the tornadoes’ way could have provided scientists like Parker with a bevy of otherwise hard-to-come-by data.

In May and June of 2009 and 2010, Parker and his team of students were among about 100 scientists who tracked storms with radar, measured wind speeds, sent up weather balloons and fed the information to a database. The study, called VORTEX2, was one of the largest field studies to determine the origin of tornadoes and a follow-on to a more limited tornado hunt in 1994 and 1995. The teams had about $10 million worth of equipment at hand.

April 2011 was never part of VORTEX2’s data collection phase.

Working with tornadoes is often frustrating, Parker acknowledged. May and June 2009 were two very uneventful months, with only two storm systems that generated tornadoes.

“Two thousand ten was much better,” Parker said. “On some days we had the pick of tornadoes.”

About 40 storm systems with the potential to generate a tornado, also known as super cells, and about 20 tornadoes occurred in May and June 2010, he said.

A super cell starts similarly to an ordinary thunderstorm. Warm, moist air rises amidst cooler surroundings and the moisture condenses. In an ordinary thunderstorm, the precipitation creates a cool downdraft that cuts off the warm, moist updraft within about 30 to 45 minutes. The storm dissipates.

A super cell thunderstorm develops when strong upper-level winds allow the warm, moist updraft to continue for up to six hours. The stage is set for the downdraft and the updraft to begin rotating.

But the process that produces a tornado in a super cell thunderstorm is not well understood, Parker said.

For example, strong super cells are not always associated with tornadoes, he said. Storms with similar structures may differ in tornado production. And the relationship between near-ground wind fields and structural damage isn’t clear either.

Scientists hope that once the VORTEX2 data is crunched, analyzed and published, some of the questions will be answered, Parker said. Head-to-head comparisons of data collected from storms that generated tornadoes and storms that didn’t might be especially fruitful.

Goals of the VORTEX2 study are to extend the average lead time for tornado warnings from about 13 minutes currently to at least 35 minutes and reduce the false alarm rate, which is currently at about 70 percent.

Tackling the challenges of climate change modeling

Monday, February 21, 2011, 12:44 am

True to its mission, the Statistical and Applied Mathematical Sciences Institute in Research Triangle Park took on a tricky data- and model-driven scientific challenge in the first public talk it organized for a lay audience.

SAMSI, a collaboration of the RTP area’s three main universities, the RTP-based National Institute of Statistical Sciences and the National Science Foundation, picked climate change as a topic for the talk on Feb. 15 and invited Douglas Nychka, a leading statistician and climate expert at the National Center for Atmospheric Research in Boulder, Colo., as its inaugural speaker.

Nychka didn’t go into the depths of the criticism that has dogged data-driven climate change modeling for more than a decade and has left most Americans convinced they can’t do anything to change global warming.

Only 18 percent of Americans strongly believe global warming is real, harmful and caused by humans, according to the 2008 American Climate Values Survey.

“This is an argument about cause and effect,” Nychka said.

He did, however, say that it was very difficult to statistically reproduce the global warming trend without including greenhouse gases from fossil fuel consumption. Read more…

Seventeen Years of Discovery in Duke Forest

Tuesday, June 1, 2010, 1:22 pm

Higher concentrations of carbon dioxide are pumped into four of the experimental rings. Photo: Will Owen

Late in 2010, an epic ecological experiment in the Triangle will begin drawing to a close when carbon dioxide stops pumping from four massive rings of towers in the Duke Forest. Since 1996, more than 250 scientists at Duke and dozens of other institutions have measured the response of this forest ecosystem to the elevated amounts of carbon dioxide expected in the Earth’s atmosphere in the future. They’ve measured tree and plant growth, photosynthesis, leaf size, soil composition, root growth, and water use in the plots bathed in elevated carbon dioxide and in three other “ambient” control plots.

The first, prototype ring was built in 1994; six more came in 1996 (three controls and three experiments). Each ring consists of 16 metal towers arranged in a circle 30 meters in diameter. Computer-controlled instruments in the experimental rings bathe the interior of the plot in carbon dioxide. It’s called Free-Air CO2 Enrichment, or FACE. As opposed to “chamber studies,” in which plants are studied in carefully controlled growth chambers or greenhouses, the rings are open to nature. That means that mammals and insects can circulate freely and that natural events like hurricanes, ice storms, and droughts affect the research site. Read more…

RTP researchers help track diseases linked to climate change

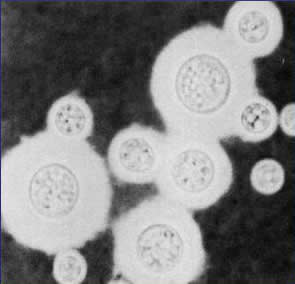

Tuesday, April 27, 2010, 8:53 pm

Duke University researchers suspect climate change is a reason why a deadly new version of a tropical fungus is spreading in the temperate climate of the Pacific Northwest.

In Africa, South America, Southeast Asia and Australia, Cryptococcus gattii infects eucalyptus trees and bothers people with compromised immune systems, such as HIV/AIDS patients and organ transplant recipients, who inhale its spores. But the strain that was first documented on Vancouver Island, Canada, a decade ago and has now spread to Seattle and Portland causes chest pain, fever, shortness of breath and weight loss in otherwise healthy people and has killed at least six of them.

In February 2007, the first North Carolina case, an otherwise healthy man, was treated at Duke University Medical Center, the Duke researchers reported in PLoS One. In a paper they published a week ago in PLoS Pathogens, the researchers wrote that the Cryptococcus gattii strain in the Pacific Northwest was new, much more virulent and favored mammals.

RTI study: The cost of mandatory emissions controls

Thursday, December 10, 2009, 10:03 pm

During a week of climate discussions in Copenhagen and Washington, RTI International released results from a study that looks at the costs of mandatory emissions controls.

The RTI analysis is based on the “Blueprint for Legislative Action,” a plan by the U.S. Climate Action Partnership that includes mandatory reductions of CO2 emissions. The partnership, which is a group of businesses and environmental organizations, recommended emissions reductions of 80 percent to 89 percent by 2020 and 58 percent by 2030. Read more…

Acid ocean test looks to the past

Thursday, December 3, 2009, 10:03 pm

UNC marine scientist Justin Ries holds two tropical pencil urchins grown under different seawater acidities. (Photo by Tom Kleindinst, WHOI)

Unlocking causes of past mass extinction events is a nifty – if not controversial – trick. But forecasting the future while also explaining the geologic past is even niftier. And that is just what a new study attempts to do by documenting experimental effects of ocean acidification upon shelled marine invertebrates.

The study, published Dec. 1 in Geology and led by a University of North Carolina scientist, reports a spectrum of positive to negative responses across seven major groups of calcifying marine organisms. It also offers supporting evidence for understanding patterns of past mass extinction — and survival — seen 251 million years ago at the Permian-Triassic boundary. Read more…